Summary

This article describes in detail how to use online survey tools to validate your key startup assumptions and gain actionable insights into topics such as pricing, target demographics and messaging.

Introduction

By now pretty much every entrepreneur knows the basics of Lean Startup methodology: start by searching for product/market fit. Get out of the building and talk to customers. Run a series of experiments to validate your ideas. Above all else, validate your thinking as early as possible: don’t spend millions of dollars building something before you have tested the ideas and concepts with customers.

Where this tends to fall down in practice is that many entrepreneurs find it hard to reach real customers. Getting feedback from co-founders, friends, small focus groups, user testing sessions and even existing customers can be very helpful to qualitatively understand how others view your offering. But many times the sample group that you can reach is too small and biased towards people that will be polite to you, or who have self-identified as liking your product.

What if you could ask 1,000 potential customers about your product, new feature or idea? Would that help you make better decisions, create content or collateral, or gain important insights?

Many survey firms, including SurveyMonkey (discussed below), offer the ability to reach a large, specifically targeted audience that they have worked to identify. The following article was written by Brent Chudoba, General Manager of the SurveyMonkey Audience business, and describes how you might go about designing a survey and interpreting the results to gain actionable insights for your startup.

Background

My name is Brent Chudoba. I’m a VP at SurveyMonkey and General Manager of the SurveyMonkey Audience business, which provides on-demand respondents for our customers who need a targeted audience. My background, pre-SurveyMonkey, was in investing: I worked for the investment firm Spectrum Equity, which acquired SurveyMonkey in a leveraged buyout in 2009. As an investor, quantifying a market opportunity was key to validating a business through diligence. Whether I realized it then or not, I’ve always been a researcher, only now I have a much better grasp of which tools are available to help people conduct more efficient and effective research. A big part of my investment work was gathering company and industry data to form an investment thesis on the companies in my universe. As an operator, I’m still collecting data and trying to make good decisions as I help grow a business.

So how do you get quality feedback?

Learning about your own customers:

If you want to talk to your own customers, and understand product satisfaction, feature requests or anything else, a survey can be a great tool. You most likely have email addresses for your customers, or can provide a feedback link on your site, or even embed a survey in-product. However, your respondents are likely your biggest advocates who want to help you, or your least satisfied customers, who may want to complain. Surveying existing customers is no doubt a valuable exercise and can create preliminary benchmarks, but the focus of this article is on surveying non-customers, or people you may not immediately be able to access.

Learning about potential customers:

I always find it hard to generalize feedback programs without diving directly into a use case. I hope the following will help give you some ideas and inspiration for the topics that are most important to you when it comes to gathering feedback.

I have a friend who runs a startup called Modify Watches. It’s been around for two years, starting to grow nicely, and primed to add more resources and start spending money to grow. The company sells affordable watches that have interchangeable faces and bands, giving consumers hundreds of customization options. Modify Watches has a unique approach on accessories – you wear a different combination of pants and shirts most days, so why not switch up your watch face and band whenever you want to match your style or mood? The company has an e-commerce model with online as the primary sales channel. Modify could really benefit from talking to potential customers to understand its market opportunity, target customers, pricing tolerance and feature needs. Modify knows a lot of information about its business anecdotally and through customer data, but what about the potential customers that are harder to reach?

Since Modify is a startup focused on finding ways to gain new customers and is testing out different pricing, advertising and business model concepts, I asked my friend, “Why don’t you talk to a representative sample of US adults to see if they would buy your product, and what their pricing tolerance is on something like watches?”

My friend, the founder, was very interested. Apparently, Modify’s biggest problem (not dissimilar to most startups) is getting its product in the hands of more people. He told me, flat out, Modify has a great product, people love it, people evangelize it, but there aren’t enough people with the product on their wrists. To change this Modify had to decide how best to put resources to work on marketing campaigns, PR and partnership efforts to help jumpstart its growth and awareness.

The risk, from his perspective, is in having the confidence to expend resources and/or raise more capital to accelerate growth while relying on a relatively limited set of customer data and questions around business model approach, ideal customers, and price point.

So what’s a resource-efficient way to find data and insights around key business questions, in order to gain the confidence to push forward on growth and awareness efforts, while still staying nimble enough to pivot if needed?

Talk to potential customers. Determine the key business questions where feedback from a large audience would help you make better decisions. Using a survey or even series of recurring surveys to monitor trends can help find answers quickly, and help give you the confidence to sprint forward and grow your customer base.

The next section covers how you might run such a project and gives some examples of how to test some common topics faced by startups, using Modify as a specific example.

Using a survey and a targeted audience to make smart decisions

How do you get started?

Work backward. First, think of what you are going to do with the data once you have it. This will help you determine what exactly you need to ask, and how to ensure the data is usable. Starting by just typing out questions can actually prolong the process. I also recommend keeping goals narrow and focused. You can always run more survey projects, so don’t overthink the first project and try to address too many topics at once, which leads to longer surveys and more survey creation and QA time. You are also going to learn a lot every time you run a project, which will make each subsequent project more successful.

Create clear objectives

Key objectives for Modify:

- Understand if its core offering is priced appropriately

- The data (output of answer options) needs to allow Modify to clearly indicate where people think the current pricing is too high, too low, or about right

- Understand brand recognition (unaided and aided) in the market to get a sense of how they should position themselves in marketing/PR (and be able to cut this data based on watch/accessory budgets and demographics)

- Find out which types of customers are most likely to purchase its product

- Where are the demographic sweet spots in terms of pricing and interest?

- Get custom market stats it can use to build a TAM (total addressable market), sanity check its market understanding and build customized data for presentations, potentially for fundraising

- How frequently do people buy watches?

- About how much do people spend on watches?

- How likely are people to purchase a watch online?

- Understand key info around its new watch subscription offering to validate whether this is a business initiative it should focus on

- Are people interested in a subscription offering that allows them to get new watches on a regular or semi-regular basis?

- How much should they charge?

- How frequently do people want new watches?

Determine your target audience

Who do you want to survey?

For Modify, they are asking questions related to watches. Pretty much everyone in the world has one or wears one, particularly those who can afford Internet connectivity (project sample base/target population).

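Sample size: 1,000 people should be enough to give us results with high confidence. For a simple random sample of that size, the margin of error on any reported percentage is roughly ±3 points at a 95% confidence level.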

Targeting: US, gender balanced. We want this to look pretty similar to the US population. Everyone is a potential watch buyer that Modify wants to hear from.
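As a rough check on the sample-size choice above, here is a minimal sketch of the standard margin-of-error formula for a proportion. It assumes a simple random sample, a 95% confidence level (z = 1.96) and the worst-case proportion of 50%. The function name and defaults are illustrative and are not part of any survey tool.

```python
import math

def margin_of_error(sample_size: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion.

    Assumes a simple random sample; z = 1.96 corresponds to 95% confidence.
    """
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)

# Full sample versus a smaller demographic slice of the same survey.
moe_full = margin_of_error(1000)
moe_slice = margin_of_error(100)

print(f"n=1000: +/- {moe_full * 100:.1f} percentage points")
print(f"n=100:  +/- {moe_slice * 100:.1f} percentage points")
```

The second line is the practical point: once you filter down to a subgroup of about 100 respondents, the uncertainty roughly triples, so small demographic cuts are better treated as directional than as precise.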

Create a great survey

Turning key objectives into great questions:

Following is a sample survey of how Modify can achieve its feedback objectives by eliciting actionable data to help inform the business decisions it’s considering. The survey questions are shown below without the multiple choice options for answers.

A live version of the full survey can be found here: https://www.surveymonkey.com/s/R3Z8TZW. A PDF version of the survey can be found here: http://www.slideshare.net/SurveyMonkeyAudience/modify-watch-survey-0712

Modify Survey Questions:

WATCH BASICS

1) Do you wear a watch on a daily or frequent basis?

2) Approximately how many watches do you own?

3) How often do you purchase watches?

WATCH PRICING AND PURCHASING

4) On average, how much do you spend when purchasing a new watch?

5) Have you ever purchased a watch online?

6) How did you purchase your last watch?

7) When you think of watch brands, which brands come to mind?

AIDED BRAND AWARENESS

8) Which of the following watch brands are you familiar with? (Select all that apply.) [randomize]

- Casio, Modify, Rolex, Omega, Cartier, Tag Heuer, Movado, Diesel, Timex, Starck, Fossil, None of the above, Other (please specify)

MODIFY WATCHES

Modify Watches is a cool new watch company seeking opinions and feedback. Our company sells great, interchangeable watches and prices watch straps and faces separately, in order to give customers ultimate choice. All of our straps and watch faces are interchangeable, so any band you buy can be mixed and matched with any face. The bands are silicone, so swapping in new bands is easy, and the watches are made from high quality, water-resistant materials.

9) Based on the information above, how likely would you be to purchase a Modify Watch?

10) About how much would you be willing to pay for a Modify Watch face and band?

MODIFY PRICING

11) Modify prices its watches based on the price of a watch face ($25) and the price of a band ($15). The bands and faces are interchangeable, so various combinations can be created for customers who purchase multiple bands and/or faces. Do you think that Modify watch face and band pricing is expensive or inexpensive?

12) Modify has recently created a subscription offering where customers can sign up for a subscription to receive new watches periodically. Customers also receive a discount on each watch as part of the subscription package. How likely would you be to sign up for a subscription offering to receive new watches periodically at discounted prices?

INTERESTED IN SUBSCRIPTION

13) If you were to sign up for a watch subscription package, how frequently would you want to receive new watches?

14) If you were to sign up for a watch subscription package, who would you like to choose the watches you receive?

- I would like Modify Watches to choose new watches for me

- I would like to choose new watches myself

- I don’t know

- Other (please specify)

15) How much of a discount off of the base pricing for watch faces ($25) and bands ($15) would you require to sign up for a watch subscription package?

Uncovering Critical Insights

After launching the survey, results started coming in immediately. After just a couple of days, Modify was ready to begin analyzing its data and finding the key insights it needed to act on to help grow its business.

Since Modify used sound survey creation principles, the results and data are actionable, and a key step in the process can begin. The question we at SurveyMonkey ask a lot of customers and potential customers, and even ourselves, before, during and after running projects is, “Now that you have answers and data, what are you going to do with it?”

So, how is Modify going to use this data to help grow their business and make better decisions?

Actionable Insights for Modify

Objective: Understand pricing

Modify wanted to understand how much consumers were willing to pay for its watches. Two key questions it asked can unlock the answer to this critical topic. And, since the survey also asked respondents whether they would be interested in Modify watches after providing a description and image, the company can see reactions of likely customers by filtering out those who were not at all interested.

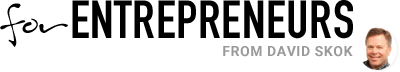

Question: On average, how much do you spend when purchasing a new watch?

Responses were mixed, but encouragingly, more than 80% said they spend $20+ when purchasing a watch and the weighted average price people spend was around $70 per watch, which makes sense given typical watch pricing. So, without yet exposing their current pricing, Modify has an unbiased view of what watch buyers are willing to pay for a new watch. Given their current pricing of around $40 per watch, this shows that they aren’t overpriced for the typical watch buyer and may have room to increase pricing over time as they keep a low entry point to gain market share and awareness.
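To make the weighted-average calculation concrete, here is a minimal sketch of how a figure like that can be derived from a multiple-choice spend question. The bucket boundaries, midpoints and response counts below are hypothetical and chosen only for illustration; they are not the actual survey answer options or results.

```python
# Hypothetical answer buckets: (lower bound in $, assumed midpoint in $, respondents).
# These numbers are made up for illustration and are NOT the survey's results.
spend_buckets = [
    (0, 10, 150),
    (20, 35, 350),
    (50, 75, 300),
    (100, 175, 150),
    (250, 300, 50),  # open-ended top bucket, so its midpoint is a judgment call
]

def weighted_average_spend(buckets):
    """Average spend, weighting each bucket midpoint by its respondent count."""
    total = sum(count for _, _, count in buckets)
    return sum(mid * count for _, mid, count in buckets) / total

def share_spending_at_least(buckets, threshold):
    """Share of respondents in buckets whose lower bound is at or above the threshold."""
    total = sum(count for _, _, count in buckets)
    return sum(count for low, _, count in buckets if low >= threshold) / total

avg_spend = weighted_average_spend(spend_buckets)
share_20_plus = share_spending_at_least(spend_buckets, 20)

print(f"Weighted average spend: ${avg_spend:.2f}")
print(f"Share spending $20 or more: {share_20_plus:.0%}")
```

The midpoint chosen for the open-ended top bucket can move the average noticeably, so it is worth stating that assumption whenever the number is quoted.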

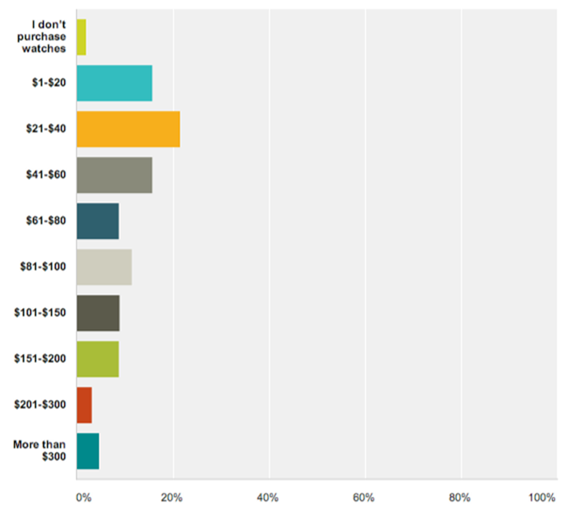

Question: Modify prices its watches based on the price of a watch face ($25) and the price of a band ($15). The bands and faces are interchangeable, so various combinations can be created for customers who purchase multiple bands and/or faces. Do you think that Modify watch face and band pricing is expensive or inexpensive?

After exposing some information about their product and pricing to those respondents who had indicated at least some interest in the Modify offering, Modify can see that potential customers felt that their pricing was already in the sweet spot of what customers were willing to pay. Around 30% of respondents felt the offering was priced appropriately and more than 60% felt it was appropriately priced or only slightly expensive or inexpensive. This is a major insight since it let Modify know they aren’t way off on pricing and that any campaigns and marketing efforts targeted to their target customers should be well received if executed well.

Pricing is right. Modify isn’t planning any major price changes, and spending time and resources on price testing isn’t a major concern that should limit Modify’s ability to grow the business.

Objective: Identify demographics of the Modify target customers

Modify had an initial sense of their target market demographics, largely based on founder knowledge of where their product has been well received so far, but this was highly anecdotal. By looking at the age and gender breakdowns of survey respondents who identified that they were ‘Extremely likely’ or ‘Very likely’ to purchase a Modify watch, the company can see if its assumptions and intuition were correct. Of course, Modify could also look at geography, income and a variety of other demographic attributes individually or in combination to build out a comprehensive analysis of key target groups, but getting an initial indication by looking at age and gender cross sections was the focus.

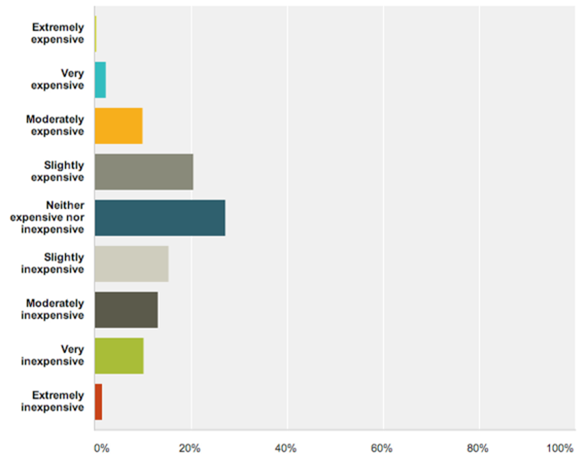

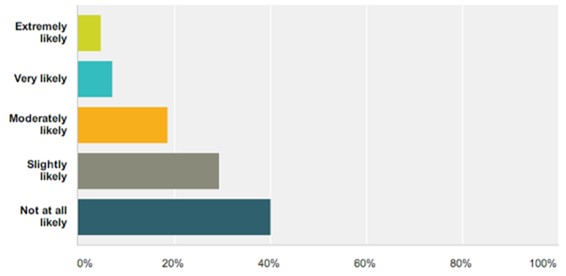

Question: Based on the information above, how likely would you be to purchase a Modify Watch?

Roughly 70% of people are at least slightly likely to purchase a Modify watch! Not bad for a startup watch brand.

Roughly 20% of respondents indicated a high likelihood of purchasing a Modify watch (the sum of the ‘Extremely likely’ and ‘Very likely’ respondents). So, drilling down on those answers and seeing which demographic groups expressed high likelihood helps pinpoint the types of customers who will be more likely to convert and become buyers.

Key demographic insights:

- Women are nearly 30% more likely to purchase than men

- People under the age of 30 are about 30% more likely to purchase than people 30 and above

- The categories that showed the strongest likelihood to purchase, in order:

- Females, under 18

- Males, under 18

- Males, 18-29

- Females, >60

- Males, 30-44

Modify was under the impression that people 18-29 of both genders, and females aged 29-60 would be a great target for them. This was mostly accurate, but the 60+ female group was a surprise, as was the strength in the <18 category. Creating offerings for, and targeting campaigns toward teenagers, young males and older females is going to be a recipe for success for Modify.

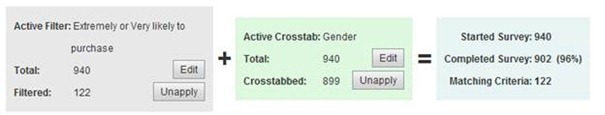

So how did they figure out this actionable data for their business plans? By applying filters and crosstabs in their survey analysis and comparing the results against the overall population who answered the survey. An example of the filters and crosstabs applied is shown above.
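If you export raw responses rather than use a survey tool's built-in analysis, the same filter-and-crosstab step can be sketched in a few lines. The rows, field names and answer labels below are hypothetical and exist only to show the mechanics; they are not the Modify data, and a real export will have its own column names.

```python
from collections import defaultdict

# Answer options counted as "high likelihood", matching the grouping described above.
HIGH_INTENT = {"Extremely likely", "Very likely"}

# Hypothetical respondent rows for illustration only; NOT the Modify survey export.
responses = [
    {"gender": "Female", "age": "Under 18", "likelihood": "Extremely likely"},
    {"gender": "Female", "age": "Under 18", "likelihood": "Slightly likely"},
    {"gender": "Male", "age": "Under 18", "likelihood": "Very likely"},
    {"gender": "Male", "age": "18-29", "likelihood": "Not at all likely"},
    {"gender": "Female", "age": "30-44", "likelihood": "Moderately likely"},
    {"gender": "Male", "age": "30-44", "likelihood": "Not at all likely"},
    {"gender": "Female", "age": "60+", "likelihood": "Very likely"},
    {"gender": "Male", "age": "60+", "likelihood": "Slightly likely"},
]

def high_intent_rate(rows):
    """Share of rows whose answer falls in HIGH_INTENT (0.0 for an empty group)."""
    if not rows:
        return 0.0
    return sum(r["likelihood"] in HIGH_INTENT for r in rows) / len(rows)

def crosstab(rows, *keys):
    """Group rows by the given demographic keys and return the high-intent rate per group."""
    groups = defaultdict(list)
    for r in rows:
        groups[tuple(r[k] for k in keys)].append(r)
    return {group: high_intent_rate(members) for group, members in groups.items()}

overall = high_intent_rate(responses)
by_gender = crosstab(responses, "gender")
by_gender_age = crosstab(responses, "gender", "age")

# Relative lift: how much more likely one group is to show high intent than another.
female_vs_male = by_gender[("Female",)] / by_gender[("Male",)] - 1

for group, rate in sorted(by_gender_age.items(), key=lambda kv: kv[1], reverse=True):
    note = "above overall" if rate > overall else "at or below overall"
    print(group, f"{rate:.0%}", note)
```

A statement such as "women are nearly 30% more likely to purchase than men" is a relative lift of the kind computed in `female_vs_male`: the ratio of two group rates minus one, not a difference in percentage points.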

Objective: Understand if a subscription option is viable

Question: How likely would you be to sign up for a subscription offering to receive new watches periodically at discounted prices?

Around 60% of people indicated they would be at least slightly likely to sign up for a Modify watch subscription. It’s always difficult to judge what people will actually do vs. what they say they will do, but this gives a strong indication that a subscription offering is in fact viable. Another great comparison point is that roughly 70% of people said they were at least slightly likely to purchase a Modify watch, so a subscription offering resonates with a majority of those willing to purchase the product.

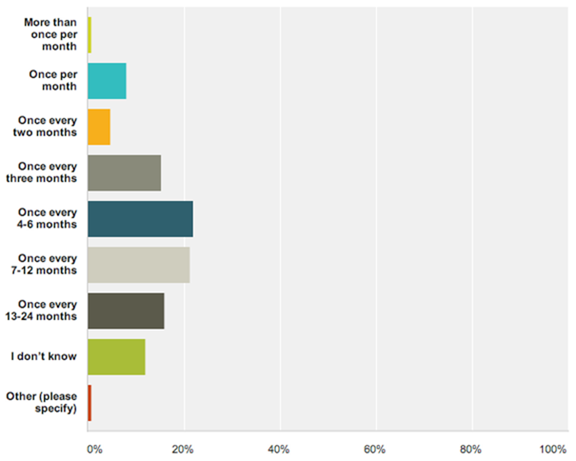

Question: If you were to sign up for a watch subscription package, how frequently would you want to receive new watches?

This is a key question in the decision on creating a subscription offering, and the answers diverged quite a bit from Modify’s initial thinking. Modify had initially planned for a monthly subscription, but respondents indicated that a frequency of every 3-9 months would be a much better fit to attract a subscription commitment. Modify believes that gifting watches could be a great reason for people to purchase a more frequent subscription term. Even so, focusing marketing on gifts and perhaps extending the term to every 2 or 3 months instead of monthly may be a big win, both to gain more subscribers and to retain them for longer.

Objective: Understand market sizing

How big is the market?

We know that watches are popular. You are sure to see them on the wrists of most people, but what percent of the population owns a watch, how many do they own on average, and how many are willing to purchase watches online? The answers to these questions are certain to go into any pitch deck Modify has for investors, and may underpin their market sizing as they build a financial model to go seize the opportunity.

Question: Do you wear a watch on a daily or frequent basis?

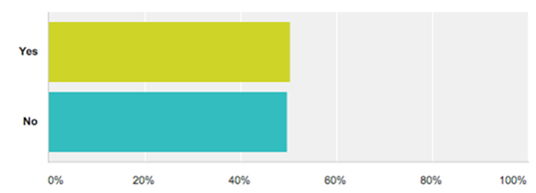

A near dead heat, about half of people wear a watch on a regular basis – but how many do they own?

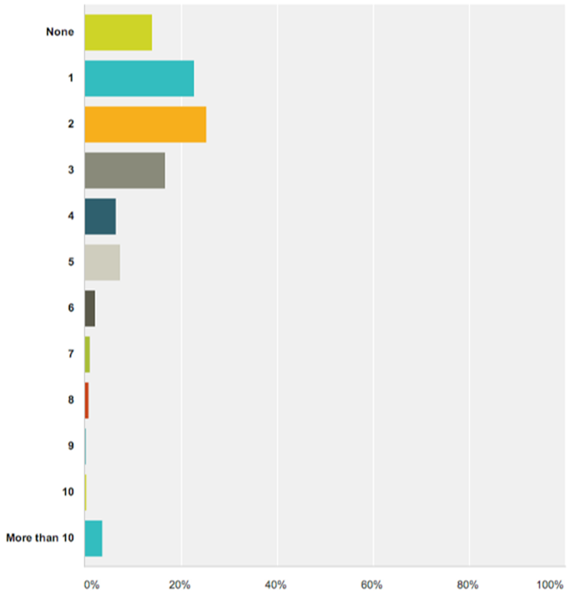

Question: Approximately how many watches do you own?

On average, people own about two watches, including those who don’t regularly wear one. When you filter out the respondents who don’t wear a watch regularly, the remaining respondents own an average of at least three watches.

Who are the most recognizable, memorable brands?

We’ve all heard of many of the largest major watch manufacturers, but who is Modify positioning itself against and what smaller brands are gaining share that they can learn from?

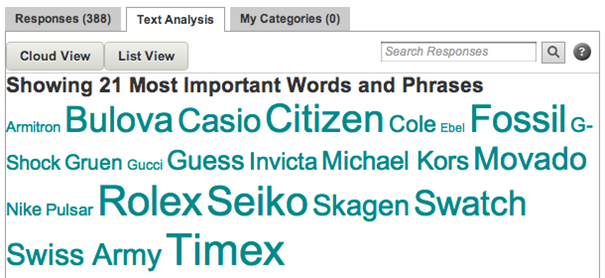

Question: When you think of watch brands, which brands come to mind?

This is a great unaided brand awareness question. Many of the strong brand names show up, with Timex and Rolex leading the way, but lots of interesting companies showed up and Modify now has a great baseline to test awareness and see how the landscape shifts over time, and a great way to monitor if any core competitors start to gain market share.

Looking at these three key market questions, we find that watches are relevant to at least half of the population, the majority of whom own at least two watches. From other survey questions we found that they spend about $70 per watch. So, in the US alone, using those metrics we have more than $20 billion in watches on people’s wrists or in their dresser drawers and jewelry cases. If Modify can gain more awareness, there is quite a bit of discretionary money being spent on watches that it is trying to win.
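The back-of-the-envelope sizing above can be written out explicitly so that each input is easy to challenge or update. The sketch below uses the three survey-derived figures from the text. The population base is an assumption introduced here (roughly 310 million people in the US), since the article does not state which base was used.

```python
# ASSUMPTION: approximate US population; this base is not stated in the survey write-up.
US_POPULATION = 310_000_000

# Inputs taken from the survey findings described above.
SHARE_WATCH_RELEVANT = 0.5   # about half wear a watch on a regular basis
WATCHES_PER_OWNER = 2        # most of them own at least two watches
AVG_SPEND_PER_WATCH = 70     # weighted average spend per watch, in dollars

def installed_base_value(population, share, watches_each, spend_each):
    """Rough dollar value of watches already owned, given the four inputs."""
    return population * share * watches_each * spend_each

market_value = installed_base_value(
    US_POPULATION, SHARE_WATCH_RELEVANT, WATCHES_PER_OWNER, AVG_SPEND_PER_WATCH
)
print(f"Estimated value of watches owned: ${market_value / 1e9:.1f} billion")
```

Under that assumed base, the estimate lands just above $20 billion. Using only the adult population, or three watches per regular wearer, would move the figure down or up, which is why a pitch deck should show the inputs alongside the total.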

Putting results into action

Now that the results are in, analyzed and buttoned up, what is Modify going to do to make full use of this great project?

Pricing

Keep it the same. Data indicates that Modify watches are priced appropriately for its core offering. If anything, Modify has room to increase pricing, but should keep pricing as affordable as possible as it gains brand awareness and share in its key demographic target areas. Lowering pricing could result in trivializing the brand/product since consumers indicated that pricing was generally about right for the product offering.

Brand Recognition

As expected, the popular brands are well known. But the landscape seems wide open. This question and set of answers was more important to set a benchmark for future projects than to understand the landscape. However, given the open-ended format of the question, several smaller competitors were surfaced which can be helpful references for Modify to check out pricing and positioning.

Demographics

Those most likely to purchase a Modify watch are widespread, but noticeable clusters emerge that should be the target of new marketing campaigns and product efforts. Target groups for future campaigns and product design initiatives should include or consider:

- Teenage males & females: social media campaigns could be more effective than expected, pop culture watch face designs could resonate

- Young males (18-29): media formats with high exposure to young males (e.g., men’s magazines, gaming sites) could be fruitful, sports or demo focused watch faces could resonate

- Older females (60+): TV or other media avenues with a highly female or older demographic could be beneficial, certain colors or designs on faces/bands could resonate

Subscription Viability

Thumbs up for Modify. Respondents overall, and those who were likely to purchase, seemed to embrace the idea of a subscription. While the cadence of Modify’s initial subscription offering (monthly) may be too frequent, moving to a 2-3 month subscription and using a slight discount off base pricing is likely to be a success, and worth the work of pushing out the offering and working through all the billing and marketing projects required.

Market Sizing

It’s big. No surprises there. Rough stats can peg the watch market at $20BN in watches sitting at home and on wrists. And more importantly, watches are relevant to more than half of Americans who either own at least one or wear one on a frequent basis. It’s not a revelation, but it is hard data that makes creating a pitch deck or advertisement that much easier.

I hope this in-depth case study and discussion on feedback can serve as a helpful template or reference point for any feedback work you may do. There are lots of fantastic web tools that can be used to create, collect and analyze survey data, and also many ways to find the appropriate respondents to help get you the opinions and feedback to help accelerate your own decision making and business growth. For this article and the Modify project, the SurveyMonkey survey tool was used to create the survey and analyze the results, and SurveyMonkey Audience was used as the data source. However, there are other good online survey tools out there, such as Qualtrics, SurveyGizmo and Typeform.

Thanks for reading. I look forward to any comments on the article, and please don’t hesitate to reach out with any inquiries.

-Brent Chudoba