Three years ago I spent a lot of time looking at SaaS business intelligence companies. I loved what I saw in the demos: easy data connections, slick-looking graphs, powerful drill-down tools and custom dashboards made the tools look like no-brainers. And then I began my diligence calls. All of these bells and whistles were useful for data analysts, I learned, but mostly worthless for regular users. Customers didn’t want to become data analysts; they wanted the software to do the work of the data analyst.

It then dawned on me that there’s a massive mismatch between the areas where vendors focus—namely graphics, dashboards, query and reporting tools—and the reality of customers’ needs. No one has time to dig through dashboards, graphs and reports. And customers don’t want to spend any time in your application unless they absolutely have to.

It turns out that this mismatch doesn’t just apply to business intelligence tools but to any software that manages data. Take Salesforce’s Sales Cloud, for instance. It collects and manages a ton of data, but does very little to proactively analyze that data and provide insights. Wouldn’t it be better if Salesforce emailed you when it detected interesting insights instead?

This is exactly what I heard from customers. They want an email or SMS alerting them to an abnormal condition, stating the insight along with as much information as possible about the issue’s root cause. Here’s an example in a sales context:

We are projecting that you will miss your plan for Bookings this quarter.

Your Plan is $2.5m. Based on the data we have, we project Bookings will be $400k below plan.

This is because you have too few Opportunities in the pipeline given your historical conversion rate from Opportunities to Closed Deals (55%). The total of all Opportunities in the funnel that are projected to close this quarter is $3.8m.

Western Region appears to be the problem. More specifically, reps John XX and Kate XX are below their targets. Click here to see further information. (This is where you get to bring them back to your application with graphs and query tools to dig deeper.)
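
The arithmetic behind an alert like this is simple. Here is a minimal sketch in Python that reuses the figures from the example above; the function name `project_bookings` is hypothetical, and a real forecast would likely weight each Opportunity by stage rather than apply one blended conversion rate.

```python
def project_bookings(pipeline_total, conversion_rate, plan):
    """Project bookings from the open pipeline and report the gap to plan."""
    projected = pipeline_total * conversion_rate
    shortfall = plan - projected
    return projected, shortfall


projected, shortfall = project_bookings(
    pipeline_total=3_800_000,  # Opportunities projected to close this quarter
    conversion_rate=0.55,      # historical Opportunity-to-Closed-Deal rate
    plan=2_500_000,
)

if shortfall > 0:
    print(f"Projected bookings: ${projected:,.0f}")
    print(f"Projected gap to plan: ${shortfall:,.0f}")
```

With these inputs the projection comes to about $2.09m, a gap of roughly $410k, which the sample alert rounds to $400k.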

To some, this may sound like science fiction, but in reality it’s not that difficult to pull off. Without realizing it, we interact with smart software all the time. Amazon automatically recommends products we might like. Nest optimizes thermostat settings. VideoIQ even figures out when someone is about to commit a crime. The key is that all of these products anticipate what a user wants, and then do it automatically.

As all software developers will tell you, making things easy for the end user usually requires hard work by the developer. So what is required to build smart data analytics software that can automatically and proactively deliver insights to users?

1. Start with Application Focus

This step only applies to platform vendors. Stating the obvious: to really provide great insights, platform vendors will need to focus on a specific application and move beyond being a broad horizontal platform. That way, the information in the system becomes understandable: for example, the software knows that this data represents bookings and is not just a bunch of numbers.

BI vendors get this, and have created a set of applications that are built on top of their platforms. Some of these are really good. But they are still short of what customers are hoping for.

2. Figure out the Important Moments

Most of the time, there is no need for a human to look at data, as everything is behaving as expected. This is one of the problems with dashboards: if you keep going back to them and there is nothing unusual to observe, you will soon stop using them. Customers want to be alerted when something problematic or out of the ordinary has happened.

BI Tools usually provide alerting functions. But those that I have seen are too simplistic, and require the user to define the rules for what is an exception. Once again, the software is not being smart, and is expecting the user to do the work, instead of figuring out how to do that step for them.

Here are some initial thoughts for how to detect the unusual events which require human attention:

Baseline the data

Most data follows a pattern, and that pattern can be discerned over time and used as a baseline. Automated pattern recognition techniques can then be used for anomaly detection when data deviates from established norms.
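
One of the simplest ways to baseline data is to compare the latest value with the mean and standard deviation of recent history. The sketch below assumes that approach (a z-score test); the function name, the threshold of three standard deviations and the sample numbers are all illustrative, and seasonal data would need a more careful baseline.

```python
from statistics import mean, stdev


def is_anomalous(history, latest, threshold=3.0):
    """Flag `latest` if it sits more than `threshold` standard deviations from the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return latest != baseline  # flat history: any change is unusual
    return abs(latest - baseline) / spread > threshold


weekly_signups = [102, 98, 105, 97, 101, 99, 103, 100]  # made-up history

print(is_anomalous(weekly_signups, 104))  # within the normal range
print(is_anomalous(weekly_signups, 60))   # far below the baseline
```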

Look at Budgets or Forecasts

Many times there will be a budget or forecast for the data that can be used to determine what is the “normal” or “expected” behavior of the data. Then when things vary from that, create an alert.
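
As a rough sketch of this idea, the Python below compares actuals with plan and reports only the metrics that are off by more than a tolerance. The function name `plan_variance_alerts`, the 10% tolerance and the sample figures are assumptions chosen for illustration.

```python
def plan_variance_alerts(actuals, plan, tolerance=0.10):
    """Return {metric: variance} for metrics that deviate from plan by more than `tolerance`."""
    alerts = {}
    for metric, planned in plan.items():
        if planned == 0 or metric not in actuals:
            continue  # cannot compute a relative variance
        variance = (actuals[metric] - planned) / planned
        if abs(variance) > tolerance:
            alerts[metric] = variance
    return alerts


plan = {"bookings": 2_500_000, "expenses": 1_200_000}
actuals = {"bookings": 2_090_000, "expenses": 1_230_000}

alerts = plan_variance_alerts(actuals, plan)
for metric, variance in alerts.items():
    print(f"{metric}: {variance:+.1%} versus plan")
```

Here only bookings would trigger an alert; expenses are within tolerance and stay quiet.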

Use Application knowledge to determine what is abnormal

In many situations, simple knowledge of the application will help the software developer recognize what is abnormal data. For example, in storage management, you would know that a disk nearing 90% of capacity is an alert condition.
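
A rule like the disk example can be built in as a default, so the user never has to define it. A minimal sketch, with a hypothetical function name and the 90% threshold taken from the example above:

```python
def disk_alert(used_bytes, total_bytes, threshold=0.90):
    """Return an alert message when usage reaches the threshold, otherwise None."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    usage = used_bytes / total_bytes
    if usage >= threshold:
        return f"Disk is {usage:.0%} full (alert threshold {threshold:.0%})."
    return None


message = disk_alert(used_bytes=460, total_bytes=500)
if message:
    print(message)
```

In practice the two numbers could come from Python's `shutil.disk_usage`, which reports total, used and free bytes for a path.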

Send regular updates when nothing is abnormal

So far we have only talked about alerting when there is abnormal activity. But in most applications it is also useful to be told at regular intervals that nothing is going wrong. By itself, this absence of any problems is actually an insight.

This is best accomplished with a regular email that lets users know what data is being monitored and that there are no exceptions. Perhaps this takes the form of a list of high-level data items with a green status light and possibly a graph or numeric value. As an example, it would be nice to get a monthly email from your accounting software to tell you that bookings, revenue, margins, expenses, and cash were all as expected, as opposed to just getting an email when they were significantly above or below plan.
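
The body of such an email could be generated along these lines. This is only a sketch: `build_digest`, the 5% tolerance and the sample figures are made up, and sending the message is left out.

```python
def build_digest(metrics, tolerance=0.05):
    """Build a plain-text status summary; each metric is (name, actual, expected)."""
    lines = []
    all_ok = True
    for name, actual, expected in metrics:
        on_track = abs(actual - expected) <= tolerance * abs(expected)
        all_ok = all_ok and on_track
        status = "GREEN" if on_track else "CHECK"
        lines.append(f"[{status}] {name}: {actual:,.0f} (expected {expected:,.0f})")
    header = (
        "All monitored metrics are as expected."
        if all_ok
        else "Some metrics need attention."
    )
    return "\n".join([header, *lines])


digest = build_digest([
    ("Bookings", 2_480_000, 2_500_000),
    ("Revenue", 1_910_000, 1_900_000),
    ("Cash", 4_050_000, 4_000_000),
])
print(digest)
```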

3. Determine the Root Cause

If your software determines that bookings are about to miss plan, that is somewhat useful. But it immediately raises the question: Why?

Normally to find the answer to that question, you would need to “drill down” into the bookings chart to figure out the root cause. Was it because one of your regions is underperforming, because a certain product didn’t meet the expected sales target, or because the overall sales productivity is lower than expected?

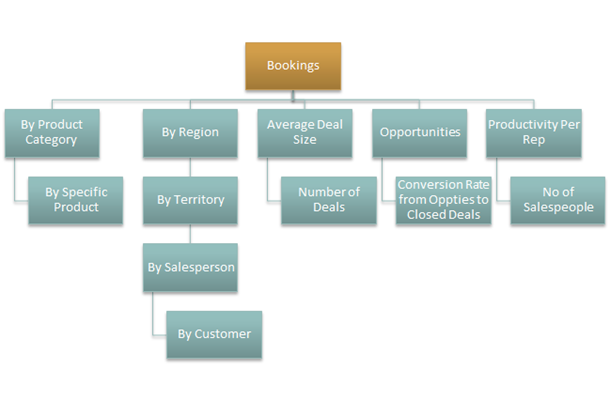

What we see from this is that most data is hierarchical in nature. For each application, there will be a small number of really important high level metrics. But behind each of these there will typically be a hierarchy of supporting metrics that help understand the root cause of a problem if the high level metric is abnormal.

Let’s take Profit as an example. If we missed our profit target, we would start looking at the following components to see where the problem had come from:

Then, if there was a problem in Bookings, we would dive deeper and look at the following set of components:

Knowing this hierarchy makes it possible for smart analytics software to do the drill down for the customer instead of making them do the work.
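
To make the idea concrete, here is a minimal sketch of an automated drill-down: each metric has an actual value, a target and optional child metrics, and the software follows the child with the largest shortfall at each level. The `Metric` class, the tree shape and every number (in thousands of dollars) are assumptions for illustration; only the region and rep names echo the sample alert earlier in this post.

```python
from dataclasses import dataclass, field


@dataclass
class Metric:
    name: str
    actual: float
    target: float
    children: list = field(default_factory=list)

    @property
    def shortfall(self):
        return self.target - self.actual


def drill_down(metric):
    """Follow the child with the largest shortfall at each level; return the path of names."""
    path = [metric.name]
    while metric.children:
        worst = max(metric.children, key=lambda child: child.shortfall)
        if worst.shortfall <= 0:
            break  # no child is below target, so stop at this level
        path.append(worst.name)
        metric = worst
    return path


bookings = Metric("Bookings", 2090, 2500, [
    Metric("Western Region", 600, 1000, [
        Metric("John XX", 200, 450),
        Metric("Kate XX", 300, 450),
        Metric("Third rep", 100, 100),
    ]),
    Metric("Eastern Region", 1490, 1500),
])

print(" > ".join(drill_down(bookings)))
```

A real product would probably report every child that is materially below target rather than only the single worst one, but the principle is the same: the hierarchy tells the software where to look next.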

4. Work with a Domain Expert to figure out the key Insights

In addition to using the Hierarchy to figure out what is important, it also makes sense to ask an executive in the application domain to walk you through the key insights they are after, and how they would go about diagnosing common problems.

If you were talking to a VP of Sales, they might tell you that they want to know the following:

- Am I going to miss the quarterly bookings number?

- And they would tell you how they would diagnose a problem by looking at contributing elements as I have attempted to show in the diagram above

- Is the pipeline being built so I am well placed to hit my number for the following quarter?

- Requires me to look at deals that are at an earlier stage in the funnel

- Which of my sales people aren’t performing?

- Which part of the selling job is a problem for them (e.g. demos)? (Allows the VP to take remedial action such as additional training.)

- Who are the best sales people at that sales function (i.e. giving demos)? Use the people who are best at giving demos to train the people who are having trouble with their demo-to-closed-deal conversion rate.

Once you know what they care about, work on setting up the metrics in such a way as to allow you to provide them with those Insights.

5. Can you also recommend a remedial action?

This may be a stretch, but in many cases it might also be possible to suggest remedial actions. For example, in a sales application, your data may show that some market development reps are not getting a good connect rate to customers after sending an initial email (i.e. they are probably not doing a good job of researching the customer and writing a compelling email). You might look for the reps that have a really high connect rate, and suggest that the sales manager consider using those reps to coach the problem reps.
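
A first version of that recommendation could be as simple as the sketch below, which pairs each rep with a low connect rate with the best-performing rep. The function name, the cut-off rates and the sample data are all hypothetical; sensible thresholds would depend on the team and the market.

```python
def suggest_coaching(connect_rates, low=0.10, high=0.30):
    """Pair each rep whose connect rate is below `low` with the top rep at or above `high`."""
    coaches = sorted(
        (rep for rep, rate in connect_rates.items() if rate >= high),
        key=lambda rep: connect_rates[rep],
        reverse=True,
    )
    if not coaches:
        return []  # nobody is strong enough to act as a coach
    strugglers = sorted(rep for rep, rate in connect_rates.items() if rate < low)
    return [(rep, coaches[0]) for rep in strugglers]


connect_rates = {"Rep A": 0.35, "Rep B": 0.08, "Rep C": 0.22, "Rep D": 0.05}

pairs = suggest_coaching(connect_rates)
for rep, coach in pairs:
    print(f"Suggest that {coach} coach {rep} on initial outreach emails.")
```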

Conclusion

If you are building software that generates, collects, or manages data in any way, ask yourself: Can customers easily gather insights from my data? There is a remarkable opportunity for us to build smarter software that gives customers what they want. It just takes a little more work.